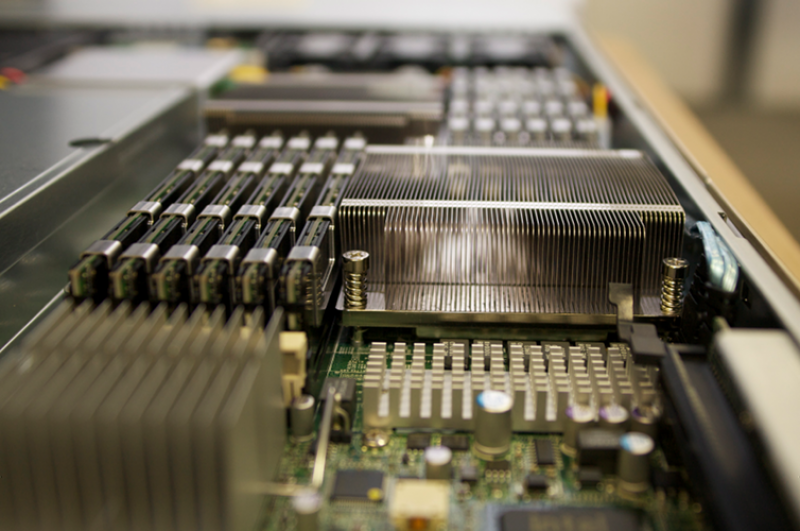

Infrastructure

An HPC centre is about more than just supercomputers. Alongside the workhorse clusters that run the numerical simulations and data analysis required for scientific research, a range of supporting hardware and software services is needed. The menu on the left contains links to some of the specialist HPC infrastructure that ICHEC hosts now or has hosted in the past. Further details on accessing Ireland's HPC National Services and academic resources can be found here.